Author: Wesley Renshaw – Lead Consultant

Part one covered the objectives of the Red Team assessment, the reconnaissance carried out, and how the team achieved an initial foothold in the client environment and escalated privileges to compromise the Asia internal network. Part two picks up from the Asia network and describes how the team eventually achieved the objectives.

Lateral Movement

With access to passwords and hashes for user accounts within the Asia forest, the next step was to work out how this information could be leveraged to gain a foothold in the UK domain, as this is where the main target and objectives were. A few approaches were possible, such as enumerating whether a trust relationship existed between the Asia forest and the root UK forest. A review of these Active Directory (AD) environments showed no forest trust between the two forests. An “external” trust, which is “non-transitive” by default, did exist between the UK domain and the Asia domain, but it was only one-way. This meant that users from the UK domain could authenticate to resources in the Asia domain, but not the other way around.
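The direction of the trust is easy to get backwards, so a minimal sketch of the reasoning may help. It models the one-way, non-transitive external trust as a simple data structure; the domain labels `asia` and `uk` and the helper names are illustrative assumptions, not anything taken from the client environment.

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class Trust:
    trusting: str  # domain that hosts the resources
    trusted: str   # domain whose users are allowed to authenticate


def can_authenticate(user_domain: str, resource_domain: str, trusts: Set[Trust]) -> bool:
    """Return True if a user from user_domain can authenticate to resource_domain.

    Non-transitive: only a direct trust between the two domains is considered.
    """
    if user_domain == resource_domain:
        return True
    return Trust(trusting=resource_domain, trusted=user_domain) in trusts


# Hypothetical labels: the Asia domain trusts the UK domain, one-way only.
TRUSTS = {Trust(trusting="asia", trusted="uk")}

print(can_authenticate("uk", "asia", TRUSTS))   # UK user -> Asia resource
print(can_authenticate("asia", "uk", TRUSTS))   # Asia user -> UK resource
```

Under these assumptions the first call succeeds and the second does not, which is why credentials from the Asia domain could not simply be used across the trust.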

Another approach was to cross-reference which privileged users within the Asia forest also had privileged accounts within the root UK forest, in the hope that passwords had been re-used again. By taking this approach it was possible to identify a Domain Administrator who re-used the same password for their privileged accounts in the Asia forest and the root UK forest. Although it was not possible to crack the password hash of this account, it was possible to take the NTLM hash and use it to authenticate against the UK domain controller and execute commands remotely using the tool “Invoke-WMIExec.ps1” by Kevin Robertson. An example of this is shown below with details redacted or renamed:

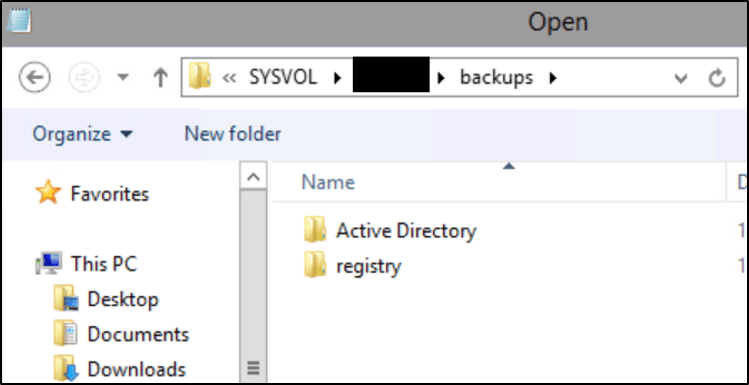

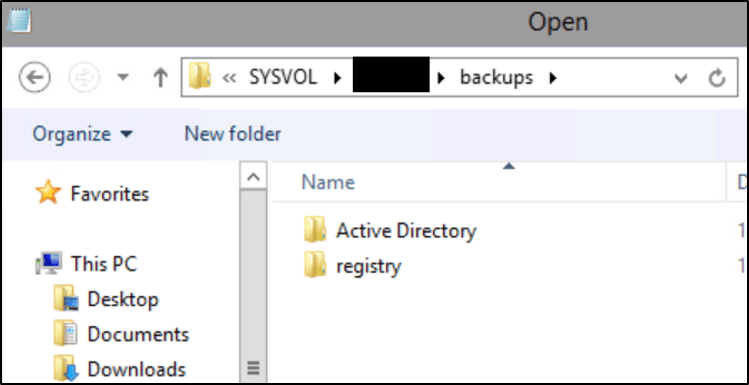

Invoke-WMIExec -Target uk-dc -Domain test.uk -Username privadmin -Hash 192C************************E2A1 -Command 'ntdsutil "ac i ntds" "ifm" "create full \\uk-dc\SYSVOL\test.uk\backups" q q'

Using the same exfiltration methods used previously for the Asia domain, the NTDS.dit database was copied out via the XenApp server.
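The cross-referencing step described earlier in this section can be sketched roughly as follows. This is a minimal illustration, assuming privileged account names have already been listed for each forest and that administrative accounts follow a prefix or suffix convention such as `adm-` or `_da`; those markers, the sample names and the helper functions are all hypothetical and would differ in a real environment.

```python
import re

# Hypothetical admin markers; real naming conventions vary per organisation.
ADMIN_MARKERS = re.compile(r"^(adm|admin|da)[-_.]|[-_.](adm|admin|da)$", re.IGNORECASE)


def base_identity(account):
    """Strip any DOMAIN\\ prefix and admin markers to get the underlying identity."""
    name = account.split("\\")[-1].lower()
    return ADMIN_MARKERS.sub("", name)


def candidate_pairs(asia_admins, uk_admins):
    """Pair privileged accounts from both forests that appear to belong to the same person."""
    uk_by_identity = {}
    for account in uk_admins:
        uk_by_identity.setdefault(base_identity(account), []).append(account)
    pairs = []
    for account in asia_admins:
        for match in uk_by_identity.get(base_identity(account), []):
            pairs.append((account, match))
    return pairs


# Made-up sample data for illustration only.
asia = ["ASIA\\adm-jsmith", "ASIA\\adm-lchen"]
uk = ["UK\\jsmith_da", "UK\\adm-mbrown"]
print(candidate_pairs(asia, uk))
```

The output is only a list of candidates; whether a password is actually shared between a pair still has to be confirmed separately.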

Once the UK domain AD database had been retrieved and copied over, the fear of losing access to the XenApp server was alleviated somewhat, although access was still valid at this point of the assessment. The team then performed offline password cracking against the UK domain users. Password cracking techniques deserve a separate blog post; however, common techniques such as wordlist mangling and common password rules such as the KoreLogic rules can be leveraged. For information relating to password auditing, see the following blog posts:

Active Directory Password Auditing Part 1 – Dumping the Hashes

Active Directory Password Auditing Part 2 – Cracking the Hashes

Active Directory Password Auditing Part 3 – Analysing the Hashes

By combining common password cracking approaches, it was possible to crack the passwords of a large number of domain users, including privileged accounts such as members of the “Domain Admins” group for the UK domain. With access to valid domain user accounts, the next phase of gaining a foothold in the UK domain could begin.

UK Internal Network Foothold

The reconnaissance phase of the assessment is extremely important in understanding and achieving the objectives outlined prior to the test. For instance, information gathered during reconnaissance can assist in navigating through the internal networks that have been compromised. In this instance, the reconnaissance phase provided details of VPN endpoints that could be used to gain a foothold in the UK network, assuming that valid credentials for a user within the relevant groups were obtained. The password cracking techniques used against the UK domain database revealed a number of users that were members of the UK and UK privileged VPN groups.

As the VPN endpoints did not utilise a multi-factor authentication (MFA) solution, it was possible to take the username and password of the UK domain accounts and log into the VPN, establishing internal access to the UK network. Prior to connecting to the VPN, the workgroup and computer name of the test laptop were changed to mimic the naming conventions of other computers within the UK network. Although crude, this provided a way to potentially blend into the UK network.

The team had now gained an internal foothold in the UK network, completing one of the objectives, and could turn to the remaining objectives.

Targeting Objectives

The initial reconnaissance phase revealed that the client heavily used Google G-Suite and Google Cloud Platform for business operations, and compromising these remained a challenge for the Dionach team even with “Enterprise Administrator” privileges globally. The issue was gaining access to Google G-Suite as privileged users, and as other users relevant to the objectives, without alerting the SOC and while bypassing MFA. A few approaches were considered: one involved adding a domain user to relevant domain groups that would later be synchronised into Google G-Suite Directory, and another involved stealing active session cookies from users who were logged into Gmail and Google Cloud Platform.

Google G-Suite Directory and Active Directory Synchronisation

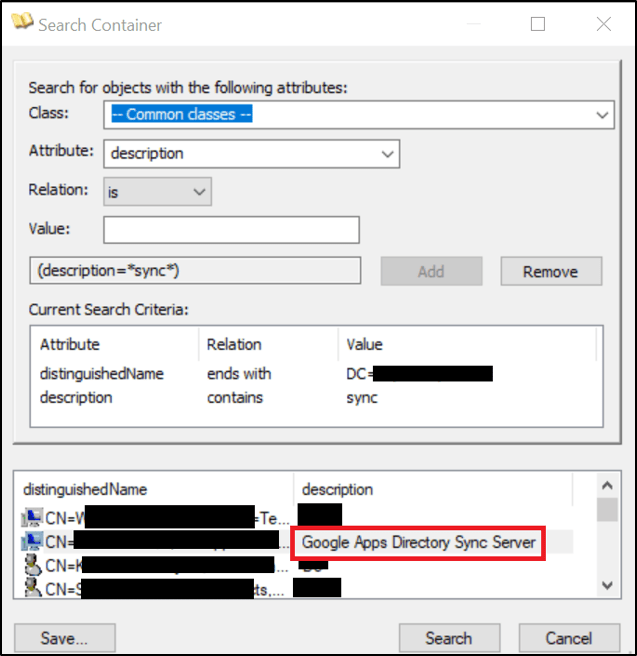

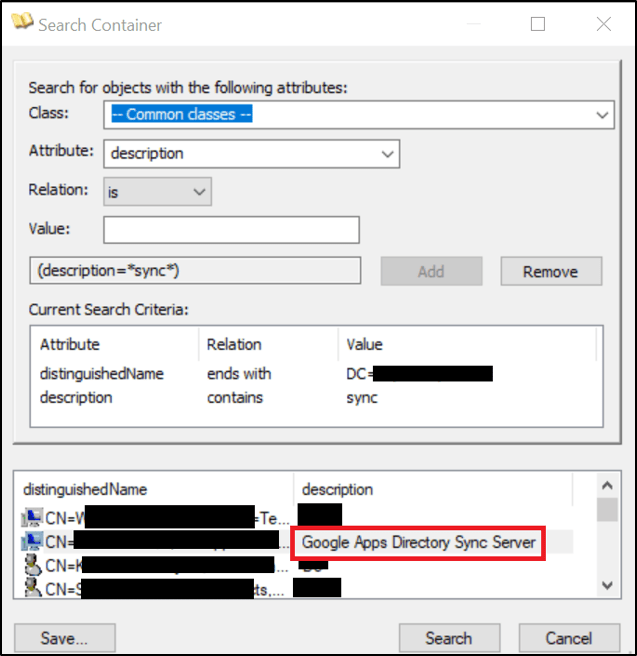

The initial idea was to discover which servers within the UK network were responsible for performing the synchronisation between Active Directory and Google G-Suite Directory. To accomplish this, the snapshot of Active Directory taken in the “Asia Internal Network Foothold” phase was used heavily to perform offline queries against AD objects, such as computers that had “Sync” within their “description” property. An example query and screenshot are provided below:
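The original query is only visible in the screenshot, so the following is a hypothetical sketch of the same kind of offline filter rather than the query the team ran. It assumes the AD snapshot has been exported to CSV with `name` and `description` columns; the column names, sample rows and function name are made up for illustration.

```python
import csv
import io


def find_computers(snapshot_csv, keyword):
    """Return names of computer objects whose description contains the keyword."""
    reader = csv.DictReader(io.StringIO(snapshot_csv))
    keyword = keyword.lower()
    return [
        row["name"]
        for row in reader
        if keyword in (row.get("description") or "").lower()
    ]


# Made-up sample export for illustration only.
SNAPSHOT = """name,description
UK-SRV-01,File server
UK-SRV-02,Google Directory Sync host
UK-WK-17,Finance workstation
"""

print(find_computers(SNAPSHOT, "sync"))
```

Because the search runs against an offline copy, it generates no queries against the live domain controllers.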

The screenshot above shows a couple of servers. By using the same trick discussed in the “Escalation of Privileges” section in part one, it was again possible to determine who was actively logged onto the servers. A non-active privileged domain user was used to remotely access the server responsible for performing Google synchronisation. Upon inspection of the XML profiles used by the Google Cloud Directory Sync tool “sync-cmd”, it appeared at first to be possible to add our newly created domain user to a specific group that was referenced throughout other XML profiles, in an attempt to get the domain user synchronised with G-Suite. From here, the domain user could then potentially be added to the groups required to achieve the objective, such as a group that has Administrator privileges within G-Suite. Unfortunately for the team, this approach was not as straightforward to carry out, as it seemed only groups could be synchronised. Due to the potential complexities and issues that might occur, another approach, discussed next, was considered.

Stealing Session Cookies Remotely from Google Chrome

One approach that was considered was stealing the session cookies of Google accounts from remote systems where interesting privileged domain users were currently logged in. A number of tools exist for identifying currently logged-on users, such as Invoke-StealthUserHunter from the PowerSploit framework, or SharpView, the C# port of PowerView. Due to the size of the networks these tools took too long. However, by using the ADExport information again and searching the description property of computer objects within the container for the head office in the UK, it was possible to identify interesting users.

With the name of the user, it is then possible to run SharpView with the “Get-DomainUser” switch and identify which groups the user is a member of, in order to determine whether they would be a useful target for accomplishing the objectives. Once a user had been identified, the next step was working out how to retrieve their session cookies remotely. Luckily, the hard work had already been done by a number of people, such as Benjamin Delpy and Will Schroeder, on the Windows Data Protection API (DPAPI) and offensive tooling to leverage it. DPAPI provides a way to encrypt and decrypt data using cryptographic keys relating to the domain user or system. The Google Chrome browser stores cookie information and encrypts the cookie value using DPAPI and the user’s master key. The master key is itself protected by the user’s password or the domain backup key, one of which is required to decrypt the cookie values.

In order to get the session cookies from the user identified previously, either the user’s password or the domain backup key is required. A problem arises, however, when this information needs to be decrypted from a remote computer, as the user’s password cannot be used and administrative privileges are required on the target. However, since “Enterprise Administrator” access was obtained in the “Escalation of Privileges” section in part one, it is possible to retrieve the domain backup key from the domain controller and use this to remotely extract session cookies from Google Chrome browsers and then decrypt them. The following command can be used to extract the domain backup key:

SharpChrome backupkey /server:uk-dc.test.uk /file:key.pvk

Once the domain backup key has been extracted, this can then be passed into SharpChrome to triage cookies remotely:

SharpChrome cookies /server:wk-uk.test.uk /pvk:key.pvk /url:google /format:json > cookies.json

The command above also uses a regular expression to restrict results to URLs matching “google”, since only Google-related websites the user has visited are of interest. Additionally, the output can be written in JSON for use with plugins such as “EditThisCookie”.
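As a rough illustration of the URL filter described above, the following sketch applies a case-insensitive regular expression to the domain of each cookie in a JSON export. The field names (`domain`, `name`, `value`), the sample data and the function name are assumptions for illustration and may not match the actual output of SharpChrome.

```python
import json
import re


def filter_cookies(cookies_json, pattern):
    """Return cookies whose 'domain' field matches the regular expression."""
    rx = re.compile(pattern, re.IGNORECASE)
    return [c for c in json.loads(cookies_json) if rx.search(c.get("domain", ""))]


# Made-up sample export; values are placeholders.
SAMPLE = json.dumps([
    {"domain": ".google.com", "name": "EXAMPLE_A", "value": "redacted"},
    {"domain": "mail.google.com", "name": "EXAMPLE_B", "value": "redacted"},
    {"domain": ".example.org", "name": "EXAMPLE_C", "value": "redacted"},
])

for cookie in filter_cookies(SAMPLE, "google"):
    print(cookie["domain"], cookie["name"])
```

A broad pattern such as “google” keeps every Google-related host, which matters because a valid session can depend on cookies set across several related domains.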

Using this approach, it is possible to leverage another user’s session cookies to bypass MFA and gain access to the user’s Gmail, spreadsheets, Drive and Google Cloud Platform.

A few issues did occur when importing cookies. The exact reason was never understood, but the issues were potentially due to cookies no longer being valid and to cookies being reliant on other cookies in order to maintain a valid session state. After a lot of trial and error the correct cookies were configured, and session hijacking of user accounts to access Google’s G-Suite, Drive, Cloud Platform and other products was achieved.

Persistent Access

Throughout these phases of the assessment no attempt had been made to establish persistence within the client’s networks, because the team had VPN access which would not be trivial to remove. However, the assessment should demonstrate some capability of developing and maintaining persistence within the target network. The approach taken leveraged the VPN access to pivot through and execute Cobalt Strike Beacons against servers and workstations. As the test laptop with the VPN connection was controlled by the team, it was simple to disable the antivirus (AV) software and get a Beacon running. Before the Beacons could be injected into targets, some infrastructure had to be configured and tested. Discussion of red team infrastructure is beyond the scope of this blog post and has been covered in numerous other blog posts and conference talks, and is documented extremely well by Steve Borosh and Jeff Dimmock in the wiki below:

https://github.com/bluscreenofjeff/Red-Team-Infrastructure-Wiki

However, a redirector was created to hide the team server from the Internet. It acted as a proxy, redirecting actual C2 traffic back to the team server and other traffic somewhere else, such as the client’s website. With the infrastructure in place, Beacons could successfully communicate back to the team server through the redirector. In order to compromise client systems within the UK network, certain settings within the Cobalt Strike Malleable C2 profile had to be configured. For instance, the “amsi_disable” option was set to true to disable AMSI, as the client used a particular AV vendor that leveraged AMSI to identify malicious strings. More information relating to Malleable C2 profiles can be found at the URL below:

https://posts.specterops.io/a-deep-dive-into-cobalt-strike-malleable-c2-6660e33b0e0b

To compromise servers within the UK network using a modified Malleable C2 profile, the “Targets” tab within Cobalt Strike was populated with the servers to target. Using the lateral movement features in Cobalt Strike, such as WMI, it is then possible to execute a payload that suspends a process, injects an HTTPS Beacon into it and resumes the process, resulting in the Beacon shellcode executing. This works by executing a Base64-encoded payload using PowerShell, which is invoked using WMI on a remote system. Typically, using PowerShell payloads within an environment that has mature logging and detection capabilities would be dangerous, as it is an obvious indicator that something isn’t quite right. PowerShell was used in this instance firstly because it was assumed that the SOC were not monitoring and alerting on the use of PowerShell within the networks, and secondly because AV alerts could be evaded since AMSI could be disabled. From here it was possible to leverage the privileged credentials obtained in the “Escalation of Privileges” section in part one and perform remote code execution tasks such as executing payloads using WMI. Certain servers were correctly configured to not permit outbound internet traffic, which required the team to use SMB named pipe pivots that ran through another Beacon that was communicating with the team server via the redirector.
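To illustrate why encoded PowerShell is such an obvious indicator where logging is mature, the following is a minimal sketch of a check a SOC might apply to process command lines collected from process creation logs. It is an assumption-laden example, not a production detection: the function name, the sample command line and the matching rules are all hypothetical, and real command lines can be obfuscated in ways this does not handle.

```python
import base64
import re

# A flag token followed by a long Base64-looking argument.
FLAG_RE = re.compile(r"(?<!\S)-(\w+)\s+([A-Za-z0-9+/]{16,}={0,2})")


def decode_encoded_command(command_line):
    """Return the decoded script if the command line carries an encoded
    PowerShell command, otherwise None."""
    if "powershell" not in command_line.lower():
        return None
    for flag, blob in FLAG_RE.findall(command_line):
        flag = flag.lower()
        # PowerShell accepts abbreviations of -EncodedCommand (e.g. -e, -enc) and -ec.
        if flag == "ec" or "encodedcommand".startswith(flag):
            try:
                # Encoded commands are Base64 over UTF-16LE text.
                return base64.b64decode(blob, validate=True).decode("utf-16-le")
            except (ValueError, UnicodeDecodeError):
                continue
    return None


# Harmless sample built for illustration only.
encoded = base64.b64encode("Write-Output 'hello'".encode("utf-16-le")).decode()
sample = "powershell.exe -NoP -W Hidden -enc " + encoded
print(decode_encoded_command(sample))
```

Decoding the argument, rather than only flagging it, gives an analyst the script content to review when triaging the alert.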

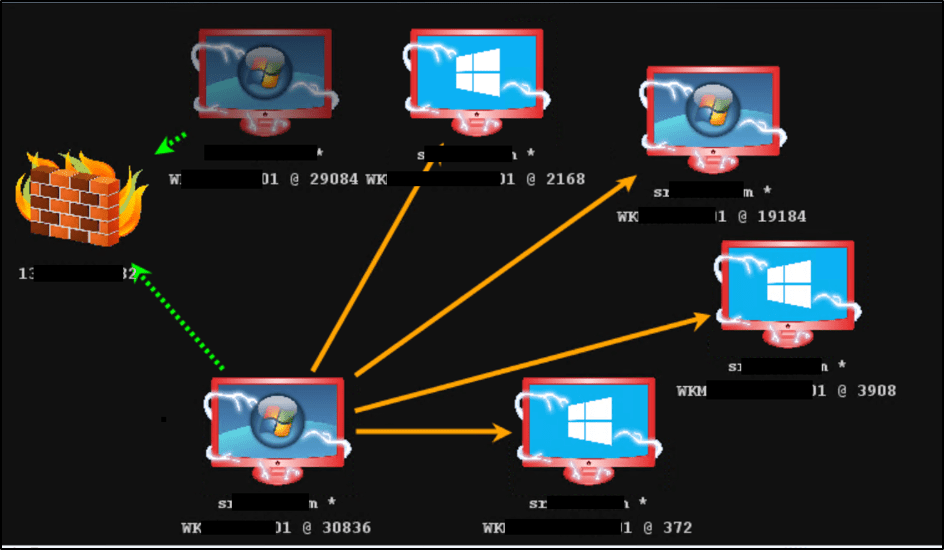

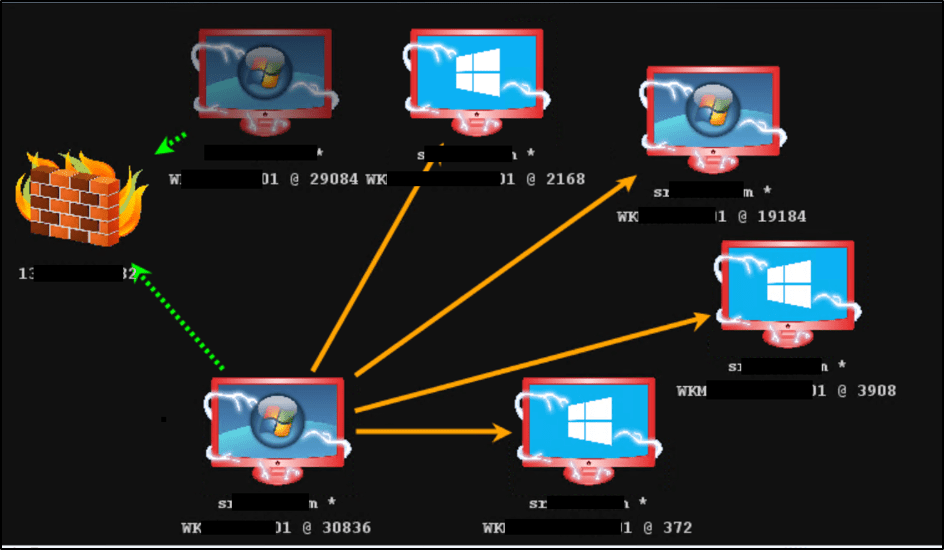

The Cobalt Strike diagram below provides an overview of the persistence achieved during the assessment:

The green arrows show the HTTPS Beacon that is egressing out through the UK network to the team server via the redirector, and the orange arrows demonstrate the SMB pivoting access gained.

Obvious Actions

The P1 incident discussed in the “Escalation of Privileges” section in part one was the last incident raised by the organisation’s SOC; all actions after that incident went unnoticed. These types of assessment aren’t about “winning” or “defeating” the SOC or Blue Team. They are about helping the analysts and the organisation improve their detection and response capabilities. As such, once the objectives are completed it can help to carry out more obvious or blatant attacks to generate more logs and alerts, giving the SOC an opportunity to practise their incident response procedures. These attacks consisted of the following:

- Attempting to run Mimikatz on servers to generate AV alerts and logs

- Adding domain users to privileged domain groups such as “Domain Admins”

- Disconnecting Administrators from their RDP sessions

A lot more could have been done to generate logs and alerts. However, the assessment was almost over at this point, so the actions above were just a quick sample of common types of attack.

Conclusion

Overall the assessment was a success for both the client and Dionach as the client is now able to demonstrate real risk to their board and get more investment for the coming year for their IT budget, to focus on improving detection and response capabilities. Additionally, the client can focus on resolving issues relating to legacy systems and processes.

The Red Team assessment carried out in this instance did not include any form of threat intelligence, as the test was objective-based and used simulated tactics, techniques and procedures (TTPs) found in common threat groups. However, intelligence-led Red Teaming exercises must include threat intelligence in order to simulate the threat actors the client is currently facing. Dionach provides this form of testing through its CREST STAR services. For more information on Dionach’s Red Team and intelligence-led Red Team services please see the following link:

Red team security assessment